AI is everywhere in education right now. Every edtech company has an "AI-powered" something. Every conference panel is debating whether ChatGPT will replace teachers. And in SEND (special educational needs and disabilities) education, where personalisation has always been the goal but rarely the reality, AI feels like it could be genuinely transformative.

Some of it is. Some of it is hype. Here's our honest take.

Where AI genuinely helps

Personalised pacing. One of the hardest things about teaching neurodivergent learners in traditional settings is that everyone learns at a different speed. AI-driven learning platforms can adapt in real time: slowing down when a concept isn't landing, skipping ahead when it is, and adjusting the difficulty curve to keep the learner in that sweet spot between bored and overwhelmed. This isn't theoretical; tools like these exist now and they work.
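For the technically curious, here's roughly what "adapting in real time" can look like at its very simplest. This is a toy sketch, not how any particular platform works: the class name, the five difficulty levels, the four-answer window, and the thresholds are all assumptions we've made up for illustration. The idea is a rolling success rate that nudges difficulty up when things are too easy and down when they're too hard.

```python
from collections import deque


class AdaptivePacer:
    """Toy pacing rule (hypothetical): adjust difficulty from a rolling success rate."""

    def __init__(self, levels=5, window=4, too_easy=0.75, too_hard=0.4):
        self.level = 1                      # current difficulty, from 1 to `levels`
        self.levels = levels
        self.too_easy = too_easy            # success rate at or above this: step up
        self.too_hard = too_hard            # success rate at or below this: step down
        self.recent = deque(maxlen=window)  # most recent answers only

    def record(self, correct):
        """Log one answer and return the difficulty level to use next."""
        self.recent.append(bool(correct))
        if len(self.recent) < self.recent.maxlen:
            return self.level               # not enough evidence yet
        rate = sum(self.recent) / len(self.recent)
        if rate >= self.too_easy and self.level < self.levels:
            self.level += 1
            self.recent.clear()             # start fresh at the new level
        elif rate <= self.too_hard and self.level > 1:
            self.level -= 1
            self.recent.clear()
        return self.level


pacer = AdaptivePacer()
for answer in [True, True, True, True]:
    next_level = pacer.record(answer)
print(next_level)
```

Even in this tiny version, a human has to decide what counts as "too hard" and how quickly to react. Those numbers are judgement calls, and a real tool would need far more than right-or-wrong answers to pace a neurodivergent learner well.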

Assistive technology. Text-to-speech that actually sounds natural. Speech-to-text that handles unusual speech patterns. Real-time captioning. Reading rulers that adjust contrast and spacing. AI has made assistive tech better, cheaper, and more available than ever before. For young people with dyslexia, processing differences, or communication needs, this stuff is life-changing.

Reducing admin burden. A huge amount of SEND provision time gets eaten by paperwork: writing reports, tracking progress, updating EHCPs (Education, Health and Care Plans), logging sessions. AI can help draft these, spot patterns in data, and flag when something needs attention. That means more time for the actual young person and less time filling in spreadsheets.

Communication support. AI-powered AAC (augmentative and alternative communication) devices are getting remarkably good at predicting what a non-verbal or minimally verbal young person wants to say. Eye-tracking, gesture recognition, and predictive text all combine to give a voice to people who previously struggled to be heard.

Where it gets tricky

The human connection problem. Here's the thing about SEND education that AI developers often miss: the relationship IS the intervention. When a young person who hasn't engaged with education for two years finally opens up to their mentor, that didn't happen because of an algorithm. It happened because a human being showed up, consistently, and earned their trust.

AI can supplement that relationship. It cannot replace it. A young person with severe anxiety doesn't need a chatbot telling them to try breathing exercises. They need a human who notices they're struggling before they even say anything.

Bias and representation. AI models are trained on data, and data reflects the world as it is — not as it should be. SEND is already an area rife with disparities: girls with autism are diagnosed later, Black Caribbean boys are more likely to be excluded, working-class families get fewer resources. If an AI system is trained on historical data, it risks baking in those same biases. "The algorithm said so" is not an acceptable reason for a young person to miss out on support.

The "personalisation" that isn't. Some edtech products slap "AI-personalised" on what is essentially a branching quiz with slightly different question orders. Genuine personalisation for SEND learners means understanding sensory needs, emotional state, communication preferences, special interests, and a hundred other factors that a standardised platform can't meaningfully capture.

Data and privacy. SEND data is among the most sensitive information that exists. Medical diagnoses, behavioural incidents, family circumstances, mental health records — all of this is being fed into AI systems with varying levels of security and transparency. Parents deserve to know exactly what data is being collected, who has access, and what it's being used for.

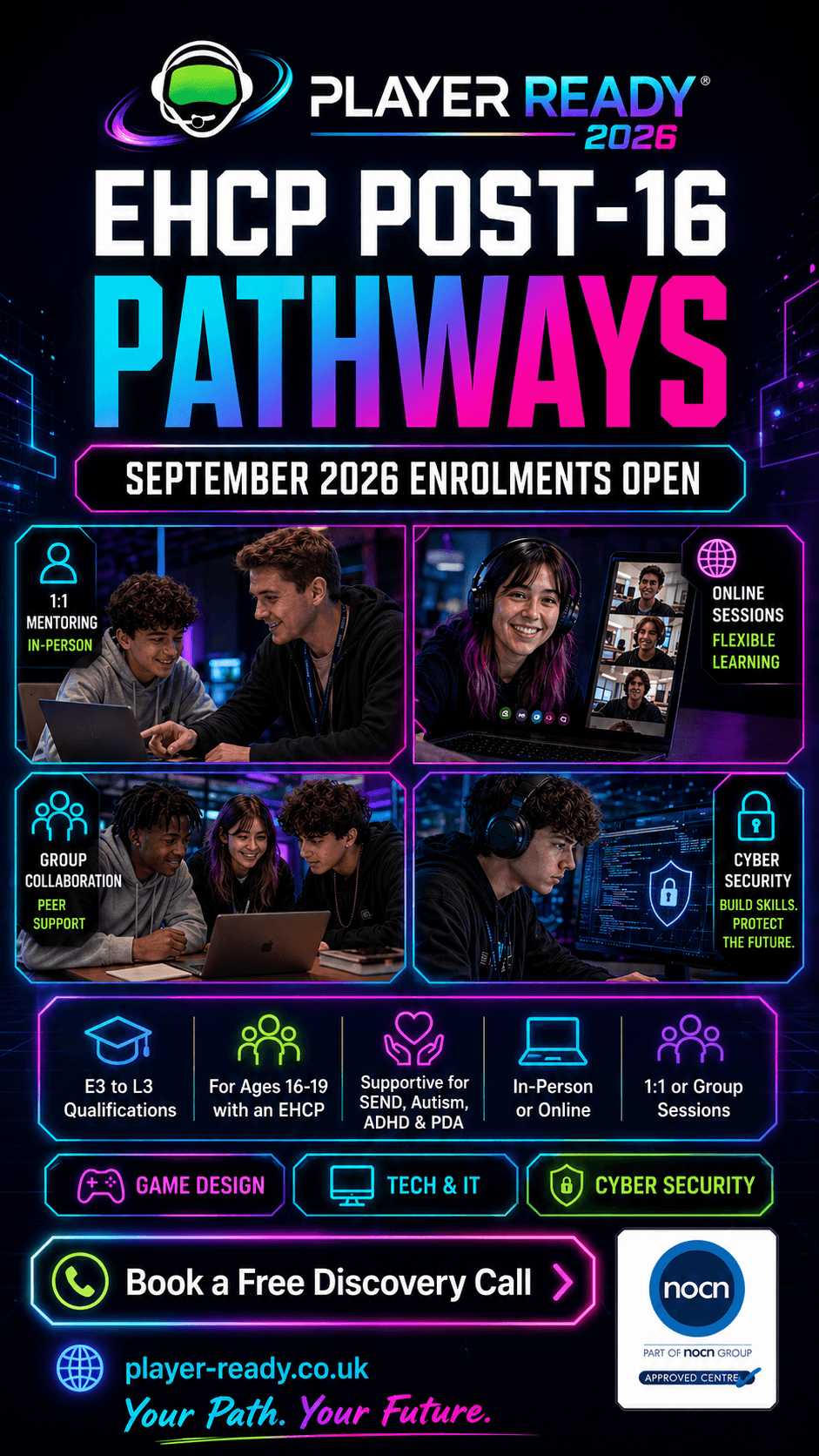

How we think about it at Player Ready

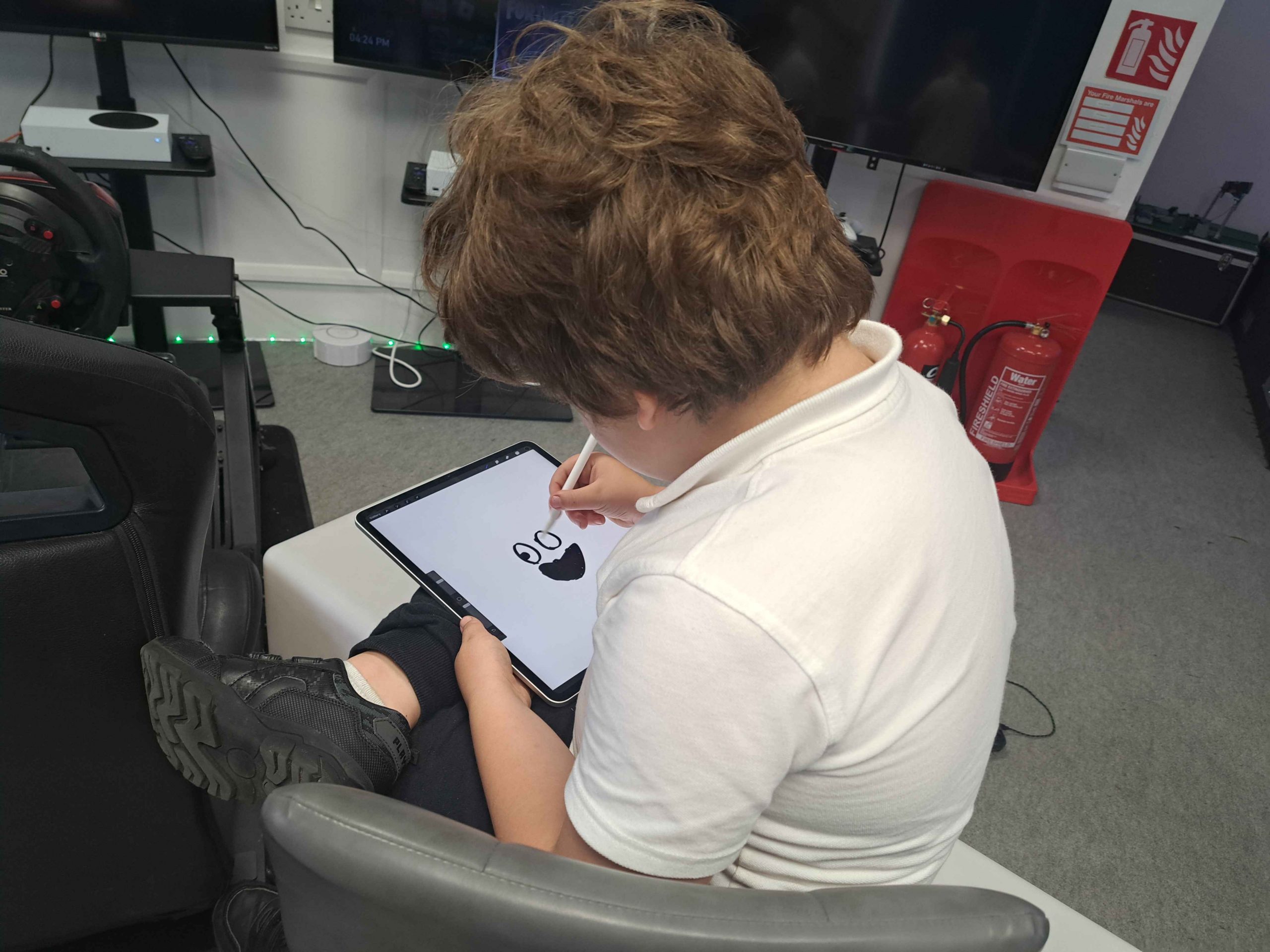

We use technology constantly in our alternative provision work. Gaming, coding, creative tools — technology is central to what we do. And yes, we use AI-powered tools where they genuinely help.

But we're clear-eyed about what technology is for and what it isn't for. Technology is brilliant at creating engaging learning environments, providing instant feedback, adapting content, and removing barriers. It's not a substitute for a mentor who knows that Tuesday mornings are always hard for a particular young person, or who remembers that last week's session ended badly and today needs to start gently.

Our approach is simple: technology serves the relationship, never the other way around. A mentor might use an AI tool to help create a personalised coding challenge. They wouldn't hand a young person a tablet and leave an AI to do the mentoring.

What parents and professionals should ask

When an education provider talks about using AI, here are the questions worth asking:

- What specific problem does this AI solve that couldn't be solved another way?

- How much human oversight is there? Who checks the AI's recommendations?

- What data is being collected, and where is it stored?

- Has this been tested with neurodivergent users specifically, or just adapted from mainstream tools?

- Does the young person have any say in how technology is used in their sessions?

AI in SEND education isn't good or bad. It's a tool. And like any tool, its value depends entirely on who's wielding it, why, and whether they remember that the young person in front of them is a human being first and a data point never.

If you'd like to see how Player Ready blends technology with genuine human mentoring, get in touch. We're always happy to talk about what we do and why.